Machine Learning for the impatient

Toy around, learn, build your first machine learning project, and get resources for your next steps

There are quite a few uphill battles if you want to delve into machine learning: understanding basic statistics, probably needing to learn Python, getting to know all of the data science libraries like Pandas, having a grasp of what ML is and what it can do, and so on and so forth. While it’s all quite doable, it can be pretty discouraging to jump through so many hoops just to get something (at all!) done.

In this article I will present a number of AI/ML-related in-house micro projects we’ve completed at Humblebee as part of dipping our toes into ML. If you are a developer I think you should probably be able to complete these kinds of projects in an evening or so.

The tools I present are powerful, easy-to-use, rather conventional machine learning-based APIs. Custom functionality is still something you will (for the next few years) have to build on your own, though advances like Google Cloud AutoML, Amazon SageMaker Autopilot, and Azure Machine Learning Designer are paving the way for people who are not data scientists.

A caveat: I am in no way an “expert” in the area with 10 years of experience and a dual PhD in data science. However, as Google has been promoting aggressively, ML cannot and should not be left entirely to one specific group (data scientists), since it overlaps with so many other areas, not least development.

Things you won’t need:

- Math wizardry skills

- A PhD degree

- An expensive, nitro-fueled computer

- Tedious local environments

- A bank full of money

Much has been written on the distinctions between regular programming, machine learning, and artificial intelligence. A helpful distinction that I like is that programming is about solving problems based on logic, rules and flow. Machine learning is a way of using statistical models to understand (predict, often) new data from knowledge of previous data. Artificial intelligence is the wider field of computers autonomously operating on, and learning from, their environment.

I recommend reading Google’s short guide (~1 hour) to problem framing for machine learning, as it gives concrete examples and guidance on what types of use cases and questions are relevant when deciding whether a problem is best solved with rule-based programming or with machine learning.

Helping Googlers learn how to think about and frame machine learning problems (developers.google.com)

How does it work, technically?

This question can be answered in many ways, and the answer differs somewhat from case to case. In general you should take a pragmatic view of ML as just one piece in a bigger puzzle. A very generic overview of the process could look like this:

- You will have some kind of data ingestion (input) happening which is likely to be automated. This could be an event stream (like data, logs, cloud events) or a click stream (like Google Analytics events).

- The model is built according to some statistical algorithm. It takes in information which is cleaned (“prepped”) to be representative of correct and intended use. This part is also where “feature engineering” takes place, which is the process that aims at shaving away less relevant parts of your data.

- Your application (or similar) will ask for a prediction for a bit of information. The prediction happens on the pre-built model, returning the outcome.

- The next step of your pipeline (for example an application) will consume that prediction data and do something relevant with it (i.e. application code happens now), for example accepting or denying a purchase order based on whether or not the order is predicted to be a fraudulent purchase.
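The steps above can be sketched in a few lines of plain Python. This is a toy under loud assumptions, not how a real fraud system works: the `Order` fields, the scaling in `prepare`, the made-up history, and the nearest-centroid "model" are all hypothetical stand-ins chosen to keep the example self-contained.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Order:
    amount: float          # order value (hypothetical feature)
    account_age_days: int  # age of the customer account (hypothetical feature)


def prepare(order: Order) -> list[float]:
    """Data prep: turn a raw order into roughly comparable numeric features."""
    return [order.amount / 1000.0, order.account_age_days / 365.0]


def train(examples: list[tuple[Order, bool]]) -> dict[bool, list[float]]:
    """'Training': store the average feature vector (centroid) per label."""
    model = {}
    for label in (True, False):
        rows = [prepare(order) for order, is_fraud in examples if is_fraud == label]
        model[label] = [mean(column) for column in zip(*rows)]
    return model


def predict(model: dict[bool, list[float]], order: Order) -> bool:
    """Prediction: pick the label whose centroid is closest to the new order."""
    features = prepare(order)

    def distance(label: bool) -> float:
        return sum((a - b) ** 2 for a, b in zip(features, model[label]))

    return min(model, key=distance)


def handle_order(model: dict[bool, list[float]], order: Order) -> str:
    """Application code: consume the prediction and act on it."""
    return "deny" if predict(model, order) else "accept"


# Made-up historical data: (order, was it fraudulent?)
history = [
    (Order(2500.0, 3), True),
    (Order(1800.0, 10), True),
    (Order(40.0, 700), False),
    (Order(120.0, 400), False),
]
model = train(history)
print(handle_order(model, Order(2200.0, 5)))   # large order, brand-new account
print(handle_order(model, Order(60.0, 900)))   # small order, old account
```

In a real setup the model would be trained ahead of time and served behind an API, and only something like `handle_order` would live in your application.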

For the tech-oriented among you: the use context could be essentially anything. Common use contexts are online REST APIs or microservices easily accessible to a web app; in the case of robots or offline devices, the model could be loaded onto the actual device/hardware itself (i.e. edge computing).

Machine Learning types

The main publicly-visible areas that ML works with are:

- Natural language processing: Understanding text and audio. This is what powers your wiretap—strike that, I mean—Google Assistant or Amazon Alexa.

- Vision: Inferring something from images, like reading text from an image, or tagging/labeling what the ML recognizes in a photo

- Data: Finding insights in historical data; used, for example, to detect spam, suggest the next sentence or points of interest, or predict stock price fluctuations

And in short, there are three primary ML learning types:

- Supervised learning: You teach it what to look for/decide on with examples

- Unsupervised learning: The ML tries to find and sort out items based on similarities

- Reinforcement learning: The ML teaches itself how to do something; common in robotics and in game AI such as that built by DeepMind
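To make the first two types concrete, here is a small plain-Python sketch. The pet weights are invented, and the two techniques (a nearest-neighbor lookup and a one-dimensional k-means with two groups) are simply convenient examples of each type, not the only options. Reinforcement learning is left out because it needs an environment to interact with.

```python
def nearest_neighbor(labeled, value):
    """Supervised: predict the label of the closest labeled example."""
    _, closest_label = min(labeled, key=lambda pair: abs(pair[0] - value))
    return closest_label


def two_means(values, rounds=10):
    """Unsupervised: split unlabeled numbers into two groups by similarity.

    Assumes the values are not all identical (otherwise one group is empty).
    """
    low, high = min(values), max(values)  # initial group centers
    for _ in range(rounds):
        group_low = [v for v in values if abs(v - low) <= abs(v - high)]
        group_high = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return group_low, group_high


# Supervised: we provide examples WITH answers (weight in kg, species).
labeled_pets = [(3.5, "cat"), (4.2, "cat"), (22.0, "dog"), (30.0, "dog")]
print(nearest_neighbor(labeled_pets, 5.0))
print(nearest_neighbor(labeled_pets, 27.0))

# Unsupervised: no answers given, the algorithm only finds two clusters.
light, heavy = two_means([3.5, 4.2, 22.0, 30.0, 5.1, 25.0])
print(light, heavy)
```

Note that the unsupervised version never learns the words "cat" or "dog"; it only discovers that the weights fall into two groups.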

Play around

Before doing anything deeper, let me recommend a few entry-level links that neatly visualize a bit of what’s going on with those crazy algos.

What-If Tool

“The What-If Tool makes it easy to efficiently and intuitively explore up to two models’ performance on a dataset. Investigate model performances for a range of features in your dataset, optimization strategies and even manipulations to individual datapoint values. All this and more, in a visual way that requires minimal code.”

What if you could inspect a machine learning model, with minimal coding required? Building effective machine learning models… (pair-code.github.io)

TensorFlow - Neural Network Playground

It's a technique for building a computer program that learns from data. It is based very loosely on how we think the… (playground.tensorflow.org)

Emoji Scavenger Hunt (made with TensorFlow.js)

“Locate the emoji we show you in the real world with your phone’s camera. A neural network will try to guess what it’s seeing.”

Mini project ideas using developer-friendly APIs

All of the below ideas we’ve done as small toy projects in the last couple of years at Humblebee.

Case 1: Watson sentiment lamp

One of our former Technical Designers at Humblebee, Paul Aston, decided to try sentiment analysis. It just happened that I had a LIFX wifi-connected light bulb hanging around. The lamp itself is just a glorified LED lamp until you hook it to some code of your own. Paul made the connection–pun intended–to use it with Slack, so we now have a lamp that displays the mood in our “random” channel as a color.

The lamp is a LIFX Mini Color, which is a “smart lightbulb” with a WiFi connection.

The LIFX Mini range is our most compact light yet, available in vivid colour, tunable white or 2700K warm white. (eu.lifx.com)

The lamp uses IBM Watson Tone Analyzer to infer the mood.
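The glue between "mood" and "color" can be very small. The sketch below is not Paul's code and calls neither Watson nor LIFX; it assumes you already have one sentiment score per message in the range -1 (negative) to +1 (positive), and both function names and the red-to-green mapping are hypothetical choices of mine.

```python
def channel_mood(message_scores: list[float]) -> float:
    """Average the per-message scores; an empty channel counts as neutral."""
    return sum(message_scores) / len(message_scores) if message_scores else 0.0


def mood_to_hsb(score: float) -> tuple[float, float, float]:
    """Map a score in [-1, 1] to (hue in degrees, saturation, brightness)."""
    clamped = max(-1.0, min(1.0, score))
    hue = (clamped + 1.0) * 60.0  # -1 -> 0 (red), 0 -> 60 (yellow), +1 -> 120 (green)
    return (hue, 1.0, 0.8)


scores = [0.8, 0.4, -0.2]  # made-up scores for three Slack messages
print(mood_to_hsb(channel_mood(scores)))
```

The resulting hue, saturation and brightness values are what you would then hand to whatever API controls your lamp.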

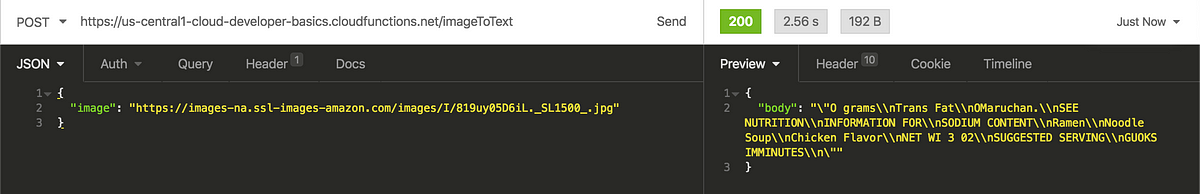

Case 2: Read text from an image and translate it into English

Reading text from images and translating it to another language is something most of us Asian food lovers would probably love when our meager Japanese skills fail us. Together with a fellow developer, I wrote a small demo for that type of use case, shared below.

This is a simple demo to translate text (Cloud Translation API) and reading text from an image (Cloud Vision API). It… (github.com)

For this, we used Google Cloud Vision API. See the link below for more info, how to get started, and to try it out live on the page!

Cloud Vision API provides a comprehensive set of capabilities including object detection, ocr, explicit content, face… (cloud.google.com)
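As a rough idea of how the two calls fit together, here is a sketch that is not the demo's actual code. It only builds the JSON request bodies and reads the fields I would expect back from the Vision `images:annotate` and Translation v2 REST endpoints; sending the requests and authenticating are left out, and the sample responses are hand-written for illustration.

```python
import base64


def vision_text_request(image_bytes: bytes) -> dict:
    """Body for a Vision API images:annotate call asking for text detection."""
    return {
        "requests": [
            {
                "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
                "features": [{"type": "TEXT_DETECTION"}],
            }
        ]
    }


def extract_text(vision_response: dict) -> str:
    """The first text annotation holds the full detected text, if any."""
    annotations = vision_response["responses"][0].get("textAnnotations", [])
    return annotations[0]["description"] if annotations else ""


def translate_request(text: str, target: str = "en") -> dict:
    """Body for a Translation v2 call; no source language, so it is detected."""
    return {"q": text, "target": target, "format": "text"}


def extract_translation(translate_response: dict) -> tuple[str, str]:
    """Return (translated text, detected source language)."""
    first = translate_response["data"]["translations"][0]
    return first["translatedText"], first.get("detectedSourceLanguage", "")


# Hand-written sample responses, shaped like the real ones but not real output.
sample_vision = {"responses": [{"textAnnotations": [{"locale": "sv", "description": "Hej!"}]}]}
sample_translate = {
    "data": {"translations": [{"translatedText": "Hello!", "detectedSourceLanguage": "sv"}]}
}

text = extract_text(sample_vision)
print(translate_request(text))
print(extract_translation(sample_translate))
```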

Here’s a noodle package (though with English text) transcribed for us:

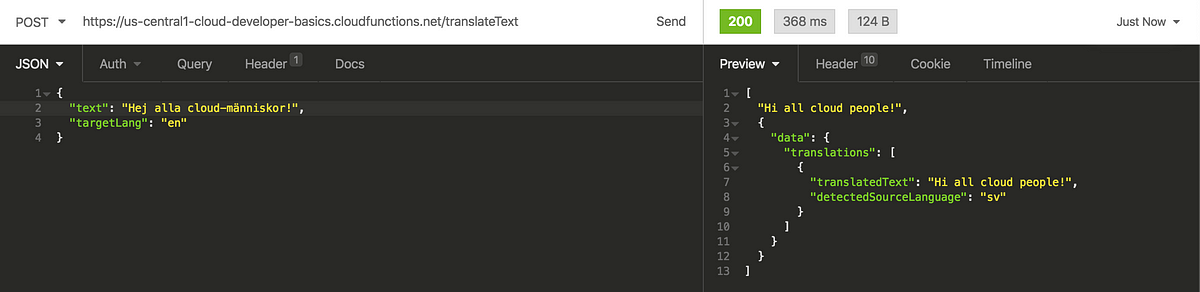

And a friendly greeting in Swedish (language inferred automatically), translated to English:

The following Google tutorial shows you something pretty similar to the above demo.

Case 3: Dog or cat?

This one is probably my favorite! 😃 Pass in an image of your good boy or your old household lion and see if the algo can detect the species correctly.

Unfortunately our online demo is no longer available, as it costs a bit of money to deploy and run the machine learning node. The code below should give you a starting point to set it up for yourself! (Note: Your mileage may vary with this code base.)

Use AutoML Vision to determine if an image is of a dog or a cat. Contains both backend code and a frontend that uses… (github.com)

For this demo we used the (then) brand-new Google Cloud AutoML Vision. It’s an amazing service, and it lets even a complete dunce train a custom image classifier with high precision.

Cloud AutoML helps you easily train high quality custom machine learning models with limited machine learning expertise… (cloud.google.com)

Note that you may have to enable the Cloud Vision API first. Google treats you to 40 “node hours” that should cover a simple model and a few hours of serving. ML nodes and training are not cheap, so beware of the costs when going in.

Further documentation is at:

Imagine you work with an architectural preservation board that's attempting to identify neighborhoods that have a… (cloud.google.com)

Case 4: Image Recognition API

An image recognition API that requires none of your own training is probably something you’ve always wanted. It is also a prominent part of the Cloud Developer Basics course I’ve been holding.

Like AutoML Vision, the Google-hosted ready-to-use Vision API is a beast that you have to try out.

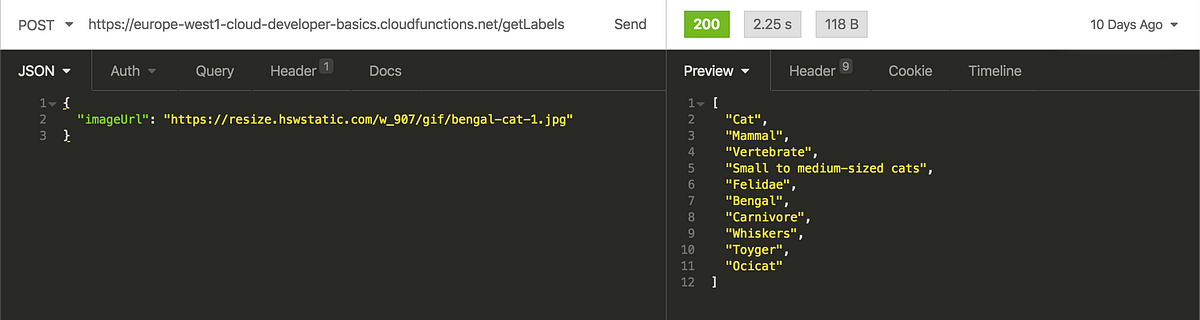

Let’s pass it a good-looking kitty cat:

Doing it like so:

And you get a list of labels that it detected when looking at the image. The key benefit with this one over AutoML Vision is that this requires zero training and no running hosting cost. “It just works”, as you’ve heard.
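For reference, a label request and the handling of its response could look roughly like the sketch below. It is an illustration under assumptions rather than the code from the original demo: the image URL, the 0.7 score cut-off, and the sample response are made up, and the actual HTTP call to the Vision `images:annotate` endpoint (plus authentication) is left out.

```python
def label_request(image_uri: str, max_results: int = 5) -> dict:
    """Body for a Vision API images:annotate call asking for labels."""
    return {
        "requests": [
            {
                "image": {"source": {"imageUri": image_uri}},
                "features": [{"type": "LABEL_DETECTION", "maxResults": max_results}],
            }
        ]
    }


def top_labels(response: dict, min_score: float = 0.7) -> list[str]:
    """Keep the label descriptions the API is reasonably confident about."""
    labels = response["responses"][0].get("labelAnnotations", [])
    return [label["description"] for label in labels if label["score"] >= min_score]


# Hand-written sample response, shaped like the real one but not real output.
sample_response = {
    "responses": [
        {
            "labelAnnotations": [
                {"description": "Cat", "score": 0.99},
                {"description": "Whiskers", "score": 0.93},
                {"description": "Carnivore", "score": 0.62},
            ]
        }
    ]
}

print(label_request("https://example.com/kitty.jpg"))  # hypothetical image URL
print(top_labels(sample_response))
```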

There’s source code for an ML-driven image recognition service for GCP here, or the same one built for Azure here. These two require accounts on Google Cloud Platform or Microsoft Azure, respectively, and that you are familiar with how to deploy code with Serverless Framework.

Moving on to the next level

Done implementing the above projects, and voraciously read and watched all the material? You’re hot 🔥 tonight! This is where to look next.

Program and train your first Machine Learning algorithm

TensorFlow is one of the biggest and most widely used machine learning libraries.

A WebGL accelerated, browser based JavaScript library for training and deploying ML models (js.tensorflow.org)

Google has a fair bit of starting material, in their super video series AI Adventures. More of a web person? This one is specifically for TensorFlow.js, the web version of TF:

You should also look into ml5.js! It’s built on top of TensorFlow.js but is friendlier. It also has a bunch of examples to look at.
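Before reaching for a library, it can help to see what “training” boils down to. The sketch below uses no TensorFlow at all: it is plain Python that fits a straight line to a handful of invented points using gradient descent, the same basic idea these libraries automate at scale. The data, learning rate and epoch count are arbitrary choices for the example.

```python
def train_line(xs, ys, learning_rate=0.01, epochs=5000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # How far off is the current guess for each data point?
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Nudge the parameters a small step in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Invented training data that follows y = 2x - 1 exactly.
xs = [-1.0, 0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x - 1 for x in xs]

w, b = train_line(xs, ys)
print(w, b)            # should end up very close to 2 and -1
print(w * 10.0 + b)    # prediction for an unseen input, close to 19
```

The model never sees the formula, only the examples, yet ends up with parameters that reproduce it.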

Learn

Kaggle is a fantastic resource for learning about applied machine learning. Look at the courses on Python, Intro to Machine Learning, Data Visualization, Pandas, and Feature Engineering. Try out the libraries Matplotlib and Seaborn if you can, as those get a lot of attention.

Take some time to check MJ Bahmani’s 20 ML Algorithms from start to Finish as that one gives you an idea of how practical ML coding can look.

For a fantastic overview of “cheat sheets” on how to work with common ML libraries, look no further than Stefan Kojouharov’s Medium article Cheat Sheets for AI, Neural Networks, Machine Learning, Deep Learning & Big Data.

Watch

A quick, easy, digestible start is Google’s AI Adventures video series on YouTube:

If you are willing to spend some money, consider Egghead and the following courses:

- Hannah Davis’s Introductory Machine Learning Algorithms in Python with scikit-learn

- Chris Achard’s Fully Connected Neural Networks with Keras

- Will Button’s Introduction to the Python 3 Programming Language

Read

- If there is “the one” that I really liked, it would be Adam Geitgey’s Machine Learning is Fun

- A Medium series I would recommend is Vishal Maini’s Machine Learning for Humans which goes into a good amount of (easy-to-understand) detail, placing most of the focus on the practical types of ML you will encounter.

- Getting your bearings right is important. I found Google’s Rules of Machine Learning to be good in providing a grounding.

- Still not done any Python learning despite hearing about it 1982476 times already in this article? Then this article at FreeCodeCamp gives you a good overview.

Buy

- Aurélien Géron’s Hands-On Machine Learning with Scikit-Learn and TensorFlow

- Valliappa Lakshmanan’s Data Science on the Google Cloud Platform

OMG 🌋 Even…more…resources…

- Elements of AI

- Google AI Education

- Google: Rules of Machine Learning

- Google: Machine Learning Crash Course

- Paperspace: Various tutorials

Get to it!

Machine learning is a new skill that I believe many developers are going to love working with. It offers a completely different way of solving problems you may already have been working on.

With that: No time like the present. Hope the above inspired you to take your first steps in learning ML and applying it to the real world!